The Reality of Multi-Supplier ADAS Development

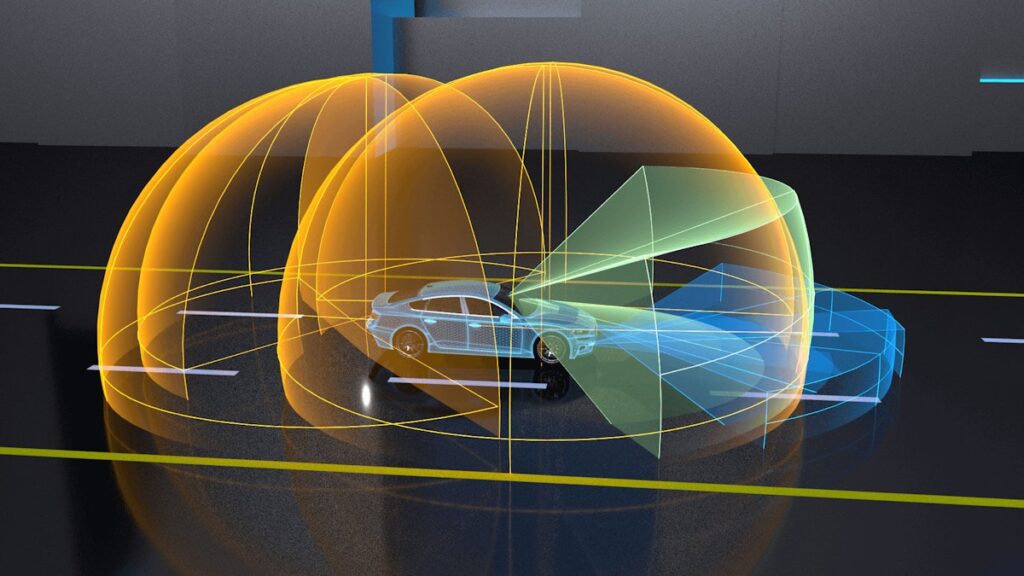

Advanced Driver Assistance Systems (ADAS) have evolved rapidly — from isolated driver aids to complex software-intensive systems.

These systems now operate at the intersection of perception, decision-making, and vehicle control. Alongside this evolution, the structure of ADAS programs has changed just as dramatically. Multi-supplier ecosystems are now the norm, not the exception.

While this model enables specialization and scalability, it also introduces a persistent and often underestimated challenge: software validation complexity. Despite mature tools, established standards, and increasing investment, many ADAS programs continue to struggle with late defect discovery, unclear ownership, and validation gaps that only surface during integration.

This is not a tooling problem. It is a structural one.

The Reality of Multi-Supplier ADAS Development

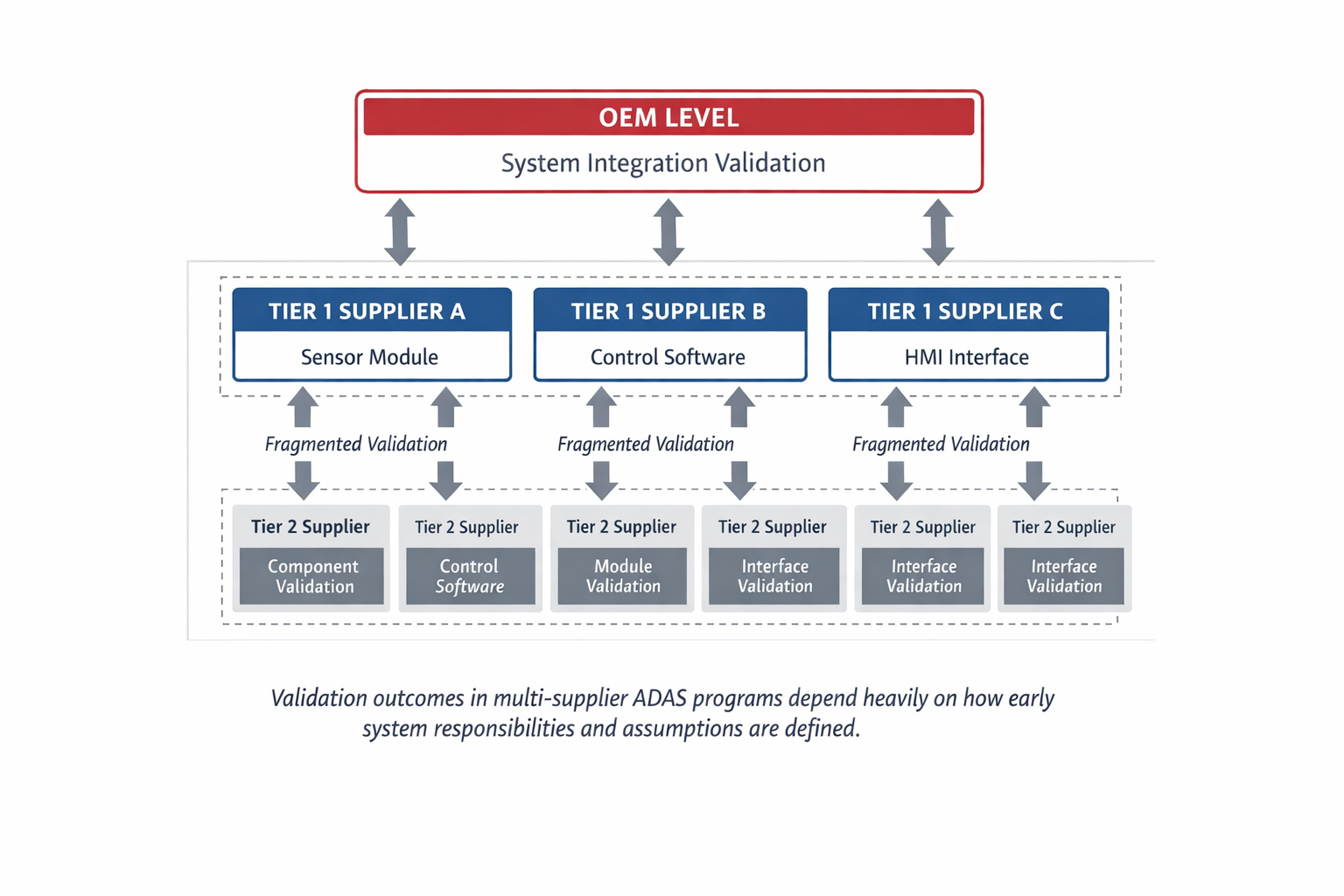

In a typical ADAS program, software responsibilities are distributed across OEM teams, Tier-1 suppliers, and multiple Tier-2 or niche technology providers. Each party operates within its own delivery model, development cadence, and interpretation of requirements.

This structure reflects both specialization and scale. Modern ADAS stacks often combine perception software, sensor integration, decision logic, and vehicle control layers delivered by different suppliers.

On paper, this division appears manageable. Contracts define scope. Interfaces are documented. Validation responsibilities are assigned. In practice, however, validation becomes fragmented across organizational boundaries.

Each supplier validates what they own. The OEM assumes system-level assurance will emerge from aggregation. And integration validation—where most critical ADAS failures actually occur—often falls into a grey zone where no single party feels fully accountable.

The result is a program that appears healthy at the component level, yet fragile at the system level.

Why Validation Breaks Down Despite “Mature” Processes

Many ADAS programs follow well-established development frameworks. Unit testing is thorough. Supplier validation reports are comprehensive. Compliance artifacts are delivered on schedule.

And yet, integration phases reveal:

- Inconsistent assumptions between software components

- Incomplete test coverage at system boundaries

- Behaviors left unvalidated due to unclear ownership

This happens because validation strategies are often designed in isolation, mirroring the supplier structure rather than the system architecture.

When validation mirrors organizational silos instead of functional dependencies, risk accumulates silently.

Common Failure Patterns in ADAS Validation

Across multi-supplier ADAS programs, several failure patterns appear repeatedly:

1. Late Discovery of Integration Defects

Issues related to timing, data synchronization, or degraded sensor inputs are often uncovered only during vehicle-level testing. At that stage, resolution is expensive, politically sensitive, and schedule-critical.

2. Inconsistent Definitions of “Validated”

One supplier’s definition of acceptable performance may differ significantly from another’s. Without a shared system-level validation framework, these differences remain hidden until integration.

3. Over-Reliance on Supplier Evidence

OEMs often inherit validation artifacts without sufficient visibility into underlying assumptions. The evidence may be technically correct—but incomplete in a system context.

4. Validation as a Milestone, Not a Discipline

Validation activities are frequently aligned to project milestones rather than treated as a continuous engineering discipline. This encourages deferral of difficult questions.

None of these failures stem from a lack of effort. They stem from misaligned validation ownership.

The Governance Problem No One Wants to Own

In multi-supplier ADAS programs, validation responsibility is rarely ambiguous on paper—but often ambiguous in reality.

Suppliers are incentivized to validate their deliverables efficiently and within scope. OEMs are incentivized to manage cost and schedule while integrating outputs from multiple parties. System-level validation, however, does not map neatly onto contractual boundaries.

As a result:

- OEM teams assume suppliers will “cover their part”

- Suppliers assume the OEM will handle integration validation

- Critical system behaviors fall between the cracks

This governance gap is particularly risky in ADAS, where emergent behavior—how components interact under edge conditions—matters more than isolated correctness.

Shifting Validation Left: What Actually Works

“Shift-left validation” is often discussed, but rarely implemented effectively in complex ADAS programs. Moving validation earlier is not about running more tests sooner — it is about making system assumptions explicit earlier.

Effective approaches include:

System-Level Validation Ownership

Assign clear ownership for system behaviors, not just components. This role must have the authority to question supplier assumptions and enforce cross-boundary validation.
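One way to make this ownership visible is to keep a simple register of system behaviors and check it for gaps. The sketch below is illustrative only: the component names, supplier names, and behaviors are hypothetical, and a real program would likely hold this data in its requirements or ALM tooling rather than in code.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class SystemBehavior:
    """A vehicle-level behavior that involves one or more components."""
    name: str
    components: Tuple[str, ...]    # components involved (hypothetical names)
    owner: Optional[str] = None    # party accountable for validating the behavior


def unowned_cross_boundary(behaviors, supplier_of):
    """Return names of behaviors that span several suppliers but have no owner."""
    gaps = []
    for behavior in behaviors:
        suppliers = {supplier_of[c] for c in behavior.components}
        if len(suppliers) > 1 and behavior.owner is None:
            gaps.append(behavior.name)
    return gaps


# Hypothetical mapping of components to the parties that deliver them.
SUPPLIER_OF = {
    "camera_perception": "Supplier A",
    "radar_fusion": "Supplier B",
    "brake_control": "Supplier C",
}

# Hypothetical behavior register.
BEHAVIORS = [
    SystemBehavior("lane_detection", ("camera_perception",), owner="Supplier A"),
    SystemBehavior(
        "aeb_in_heavy_rain",
        ("camera_perception", "radar_fusion", "brake_control"),
    ),
    SystemBehavior(
        "fused_object_tracking",
        ("camera_perception", "radar_fusion"),
        owner="OEM system validation",
    ),
]

GAPS = unowned_cross_boundary(BEHAVIORS, SUPPLIER_OF)
print(GAPS)
```

In this made-up register, the only behavior flagged is the one that crosses three suppliers and has no named owner, which is the grey zone described earlier.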

Early Interface and Assumption Tracking

Interfaces are more than APIs. They include timing, performance expectations, data quality, and failure modes. These assumptions must be documented, validated, and revisited continuously.
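As a minimal sketch of what tracking these assumptions could look like, the example below records the timing, performance, data quality, and failure-mode expectations for one signal and compares them against measured values. All field names, signal names, and thresholds are assumptions made for illustration and are not taken from any standard or tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InterfaceAssumption:
    """What a consumer assumes about a signal, beyond its data type."""
    signal: str
    max_latency_ms: float       # timing: worst-case age of data on arrival
    min_update_rate_hz: float   # performance: how often a fresh value arrives
    min_confidence: float       # data quality: lowest usable confidence
    on_loss: str                # failure mode: expected reaction to signal loss


@dataclass(frozen=True)
class ObservedBehavior:
    """What was measured for the same signal during integration testing."""
    signal: str
    latency_ms: float
    update_rate_hz: float
    confidence: float


def violations(assumed, observed):
    """List the assumptions that the observed behavior does not meet.

    The failure-mode entry (on_loss) is recorded for review only; it is
    not checked automatically in this sketch.
    """
    found = []
    if observed.latency_ms > assumed.max_latency_ms:
        found.append("latency")
    if observed.update_rate_hz < assumed.min_update_rate_hz:
        found.append("update_rate")
    if observed.confidence < assumed.min_confidence:
        found.append("confidence")
    return found


# Hypothetical values for a fused object list consumed by the planner.
ASSUMED = InterfaceAssumption(
    signal="fused_object_list",
    max_latency_ms=50.0,
    min_update_rate_hz=20.0,
    min_confidence=0.8,
    on_loss="planner falls back to degraded mode",
)
OBSERVED = ObservedBehavior(
    signal="fused_object_list",
    latency_ms=65.0,
    update_rate_hz=25.0,
    confidence=0.85,
)

FINDINGS = violations(ASSUMED, OBSERVED)
print(FINDINGS)
```

Here the API contract would still pass, since the data type and update rate are fine, yet the latency assumption is violated. That is the kind of mismatch that otherwise tends to surface only at vehicle level.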

Validation Frameworks Aligned to Risk

Not all ADAS functions carry equal risk. Validation effort should be prioritized based on safety impact, complexity, and uncertainty—not evenly distributed across all components.
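To illustrate the idea, the sketch below ranks functions by a simple risk score. The scoring rule (the product of three 1 to 5 ratings) and the example ratings are assumptions chosen for illustration; they are not a method prescribed by any standard, and a real program would derive its ratings from its own hazard and safety analyses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AdasFunction:
    """A function rated on three factors, each from 1 (low) to 5 (high)."""
    name: str
    safety_impact: int
    complexity: int
    uncertainty: int

    @property
    def risk_score(self) -> int:
        # Assumed scoring rule: multiply the three ratings.
        return self.safety_impact * self.complexity * self.uncertainty


def prioritize(functions):
    """Return the functions ordered from highest to lowest risk score."""
    return sorted(functions, key=lambda f: f.risk_score, reverse=True)


# Hypothetical ratings, listed in no particular order.
FUNCTIONS = [
    AdasFunction("parking_display_overlay", 1, 2, 2),
    AdasFunction("automatic_emergency_braking", 5, 4, 4),
    AdasFunction("lane_keeping_assist", 4, 3, 3),
]

RANKED = [(f.name, f.risk_score) for f in prioritize(FUNCTIONS)]
for name, score in RANKED:
    print(f"{name}: {score}")
```

Even a crude ranking like this makes the argument concrete: with these ratings, emergency braking scores twenty times higher than the display overlay, so spreading validation effort evenly across the three would be hard to justify.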

When validation strategy follows system risk rather than organizational convenience, defects surface earlier and with less disruption.

A Practical Decision Framework: When to Validate What

One of the most effective ways to reduce validation overload is to distinguish clearly between validation levels:

- Unit validation: correctness of individual software components

- Integration validation: correctness of interactions between components

- System validation: correctness of vehicle-level behavior under realistic conditions

Problems arise when these levels are blurred or duplicated. A disciplined program defines:

- What must be validated at each level

- Who owns each level

- What evidence is sufficient to progress

This clarity prevents both over-testing and under-testing—two common failure modes in ADAS programs.
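The three questions above can be captured in a small validation plan that is checked before each level is allowed to progress. The following is a sketch under stated assumptions: the owner names and evidence items are hypothetical, and the gate logic is deliberately minimal.

```python
from dataclasses import dataclass, field
from typing import Optional, Set


@dataclass
class ValidationLevel:
    """One validation level with its scope, owner, and exit evidence."""
    name: str
    scope: str
    owner: Optional[str]
    required_evidence: Set[str] = field(default_factory=set)
    delivered_evidence: Set[str] = field(default_factory=set)

    def blockers(self):
        """Return the reasons this level cannot progress (empty if none)."""
        issues = []
        if not self.owner:
            issues.append("no owner assigned")
        if not self.required_evidence:
            issues.append("no exit evidence defined")
        missing = self.required_evidence - self.delivered_evidence
        for item in sorted(missing):
            issues.append(f"missing evidence: {item}")
        return issues


# Hypothetical plan covering the three levels described above.
PLAN = [
    ValidationLevel(
        name="unit",
        scope="correctness of individual software components",
        owner="Component supplier",
        required_evidence={"unit test report"},
        delivered_evidence={"unit test report"},
    ),
    ValidationLevel(
        name="integration",
        scope="correctness of interactions between components",
        owner=None,
        required_evidence={"interface timing report", "fault injection results"},
        delivered_evidence={"interface timing report"},
    ),
    ValidationLevel(
        name="system",
        scope="correctness of vehicle-level behavior under realistic conditions",
        owner="OEM system validation",
        required_evidence={"vehicle-level scenario results"},
    ),
]

REPORT = {level.name: level.blockers() for level in PLAN}
for name, issues in REPORT.items():
    print(name, "->", issues or "ready to progress")
```

In this example the unit level is ready, while the integration level is blocked on both a missing owner and missing evidence, mirroring the pattern where component-level health hides system-level fragility.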

What ADAS Program Leaders Can Do Differently

For leaders overseeing multi-supplier ADAS programs, several practical actions make a disproportionate difference:

- Ask early: Who owns system-level validation outcomes?

- Challenge assumptions embedded in supplier validation artifacts

- Require explicit validation of cross-boundary behaviors

- Treat validation findings as system feedback, not supplier failures

Most importantly, recognize that validation is a leadership responsibility, not just a technical activity.

Validation as a Program Discipline

As ADAS systems continue to grow in complexity, validation can no longer be treated as a downstream checkpoint. It must be embedded into program governance, system architecture decisions, and supplier engagement models.

That discipline often determines whether integration phases remain predictable or become program recovery efforts. Programs that practice it align validation strategy with system risk, make assumptions visible early, and assign ownership where it matters most.

In multi-supplier ADAS environments, this discipline is not optional. It is the difference between predictable delivery and late-stage firefighting.

Author Bio

Vhyvhitavya Vadlamani works in engineering program development and strategic business initiatives across automotive and aerospace sectors. His experience includes multi-supplier delivery environments, system integration challenges, and software-intensive engineering programs. He focuses on the intersection of engineering discipline, program governance, and market development for complex technology organizations.